I’ve finally been granted access to test DALL-E 2, a “new Artificial Intelligence (AI) system that can create realistic images and art from a description in natural language.”

I’m happy to share with you some of my search results and strategies, as well as discuss how this kind of disruptive technology may soon make us stock photographers redundant.

How does DALL-E 2 work?

DALL-E 2’s algorithm has ‘learned’ how descriptive text relates to images. Without getting overly technical, the system uses a process called ‘diffusion’, where it starts with a pattern of random dots and gradually changes the pattern of dots toward an image ‘when it recognizes specific aspects of that image.’
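To make the idea of “gradually changing a pattern of random dots toward an image” a little more concrete, here is a toy sketch in Python. It is not how DALL-E 2 is actually implemented. In a real diffusion model the final image is unknown, and a trained neural network, guided by the text prompt, predicts at each step what noise to remove. Here a known target (a hypothetical four-pixel “image”) stands in for that network. The function name `toy_reverse_diffusion` and its parameters are illustrative assumptions, not part of any real DALL-E 2 API.

```python
import random


def toy_reverse_diffusion(target, steps=50, seed=0):
    """Toy sketch of the reverse (denoising) idea behind diffusion.

    ASSUMPTION: the known `target` stands in for the trained,
    text-conditioned network a real model would use to decide
    what noise to remove at each step.
    """
    rng = random.Random(seed)
    # Start from a "pattern of random dots": pixel values in [0, 1).
    x = [rng.random() for _ in target]
    for t in range(steps, 0, -1):
        # Fresh noise shrinks as t counts down and is zero on the final step.
        noise_scale = 0.1 * (t - 1) / steps
        # Move each pixel 1/t of the remaining way toward the target,
        # plus a little random noise.
        x = [xi + (ti - xi) / t + rng.gauss(0.0, noise_scale)
             for xi, ti in zip(x, target)]
    return x


if __name__ == "__main__":
    tiny_image = [0.0, 1.0, 1.0, 0.0]  # hypothetical 2x2 greyscale "image"
    result = toy_reverse_diffusion(tiny_image)
    print([round(v, 3) for v in result])
```

Moving only 1/t of the remaining distance at each step means the noisy pattern drifts slowly at first and only settles fully on the final step, loosely mirroring how a diffusion model’s output sharpens gradually rather than appearing all at once.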

Sometimes the buyer doesn’t know what they want

Before getting started with examples, it’s important to note a premise that works in favour of AI for stock photo buyers: few of them have a clear idea of the images they want. For many, it would be easier to simply write a long, detailed sentence and let the software create the image for them, rather than typing that same sentence into a stock search and getting no results, as you can see below with my very specific search.

This premise can also work positively for contributors who are still at the brainstorming stage and experimenting with different concepts / palettes / models.

Let’s get started! Having fun with image creation – 6 examples

I was kindly gifted 50 search credits to play around with some of the different search results. Lyn Randle, a fellow Arcangel contributor, also had a go and discussed her experiences on her Twitter feed.

Example 1 – “Brazilian woman celebrating World Cup win at space station in the future”

With the football World Cup in Qatar set to begin in a few months’ time, why not imagine Brazil winning the World Cup in the future, perhaps celebrating from a space station? Why not!

I particularly liked the second image from the left, so the software gave me some variations.

The faces look a little weird, I must admit, but conceptually it’s a good start. I particularly like the colours and the cartoonish feel, which could work great for children’s merchandise, perhaps. That is, of course, once the quality improves.

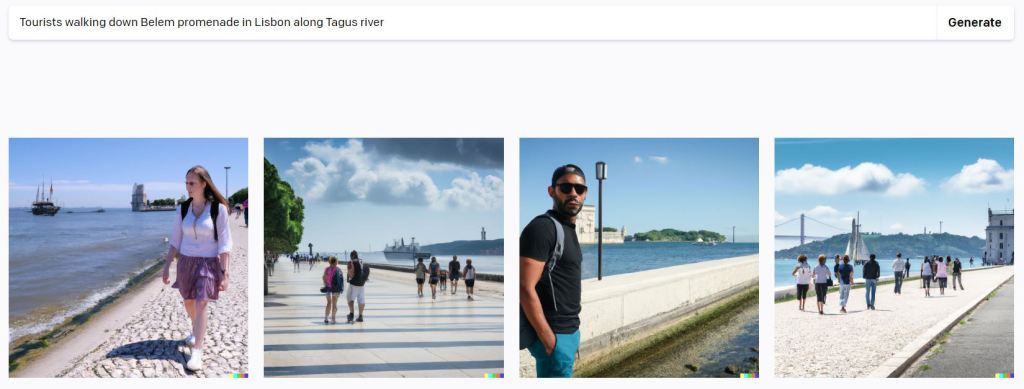

Example 2 – “Tourists walking down Belem promenade in Lisbon along Tagus river”

Next, as a travel photographer, I couldn’t resist seeing what the software would dish out for a local scene in the Belem district of Lisbon.

Again, some of these look weird (especially the first one), but the last one from the left looks interesting, so let’s see what I get back in terms of variations.

Now this is something I can begin to work with at a conceptual level. I can also imagine an architectural company using something like this as a concept for a new building (3D rendering).

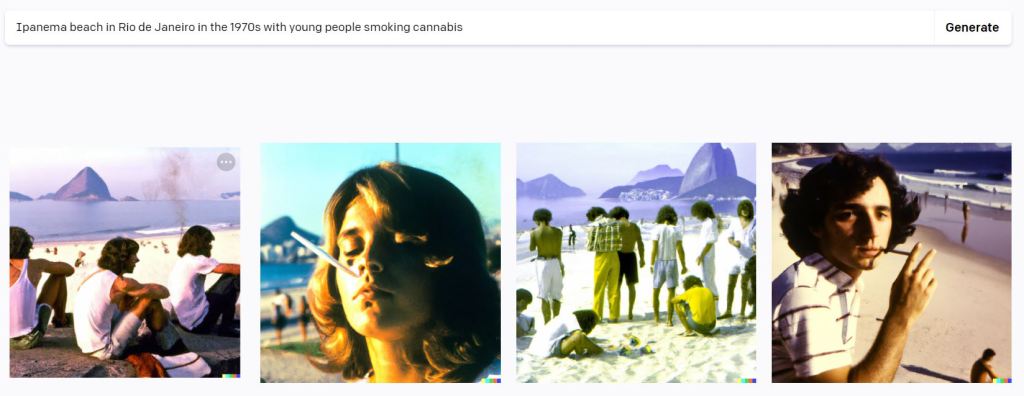

Example 3 – “Ipanema beach 1970s, young people smoking cannabis on beach”

Pretty good, I must say (except for the second one). The first stands out for me, so let’s see what results I get from variations.

Well, it’s not Ipanema beach, but the variations clearly show Sugarloaf Mountain, which is still cool since the city is at least identifiable. The mood is certainly there, with the hairstyles and clothes linking it to the 1970s. It could have been one of my parents, since they grew up in the city during the 70s! 😀

Example 4 – “Alaskan female in bikini eating gyros in Miami beach at winter”

Theo gave me this wacky suggestion to search and I duly obliged.

And then, of course, Greek football fans celebrating! Any chance in Qatar, Theo?

These two could quite easily be used for microstock: simple search, simple result.

Example 4 – “Transgender United States President in the Future”

Taking a turn towards the trending topic of gender politics, let’s see what the AI software comes up with…

Example 5 – “proud, overweight woman, posing, full length wearing tight clothes, feminist”

Sticking with today’s body-positivity theme, let’s see what this search yields…

I must say that these last results were the most interesting from a commercial point of view. As long as the face isn’t visible, it tends to be pretty good. These can easily be used in all sorts of microstock composites with no need for a model.

Example 6 – “dog running on beach chasing a frog”

Now on to pets. This should produce some good results, since the AI is drawing on millions of existing images of pets, which tend to be a popular subject.

The shadow areas look great, and there’s tons of detail on the dog itself. Impressed!

Three Arcangel Client Briefs + 1 Fun One

Now, let’s see if I can create a scene (for conceptual / brainstorming purposes only, never to submit, even as a composite) described in an actual Arcangel image brief, as requested by a client and posted on their public app.

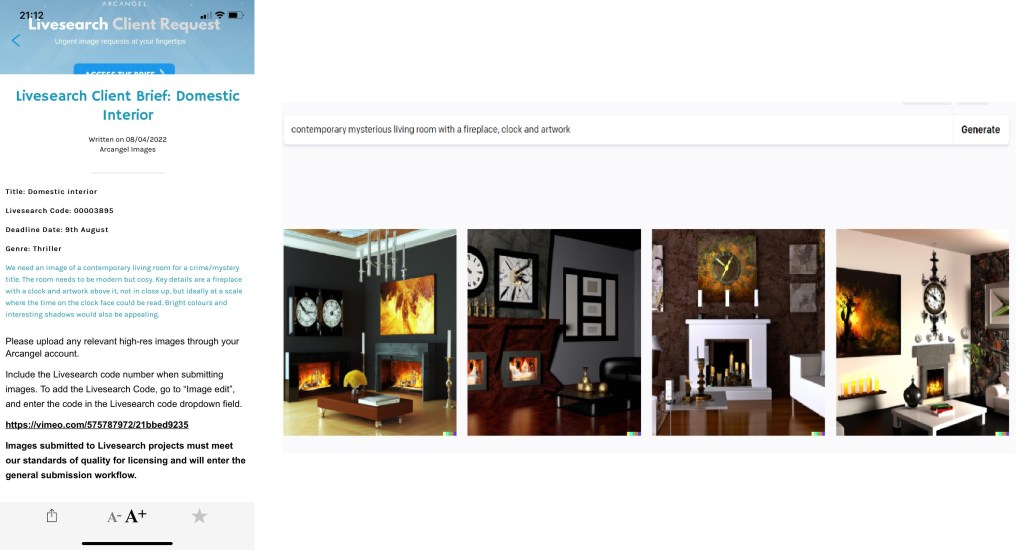

Client Brief 1: Domestic Interior

Thoughts: Too bright to work with and messy, with little copy space, but something to get off the starting blocks.

Client Brief 2: Glamorous Housewife

Thoughts: The last one has some potential, so I’ve checked for variations.

The above gives some good ideas. What do you think?

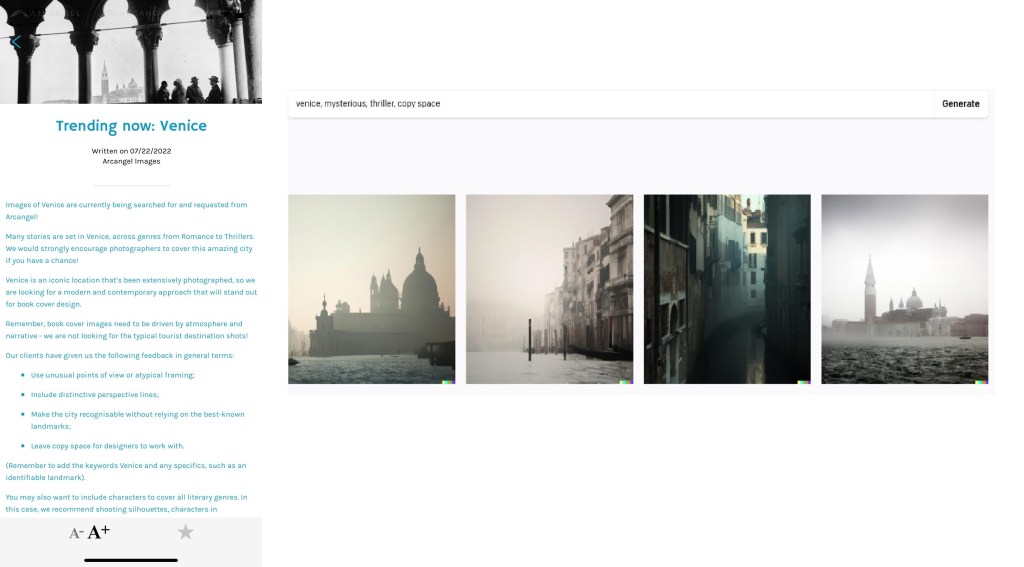

Client Brief 3: Venice

Thoughts: These all have the feel of book covers, including the much-needed copy space! As Venice is such a popular travel destination, the AI has lots of existing images to draw from.

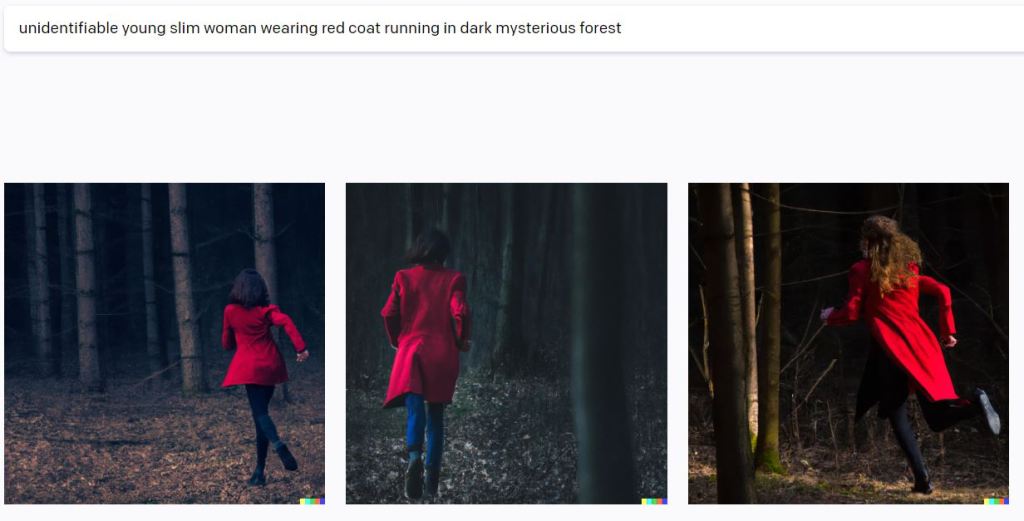

Last but not least – the cliché “Woman in red jacket running in a dark forest”

Book covers can’t be book covers without this classic, so let’s see what we get…

Thoughts: Some interesting colours and shadow areas. The models still look a bit unrealistic though.

Other Practical Implications

There are loads of other implications, including image editing, as discussed in this PetaPixel article.

What about legal implications of usage?

Ownership with this type of new technology is complicated. After all, the images created don’t come from nothing; they are derived from dozens or hundreds of real images with real copyright owners. Whether one can then upload such images to microstock agencies is an interesting question. Another is whether agencies would allow such images in their databases and how they would enforce such rules.

Arcangel, my darling book-cover agency, has taken a strict approach towards AI images being submitted by contributors. Nash Ignacio wrote the following on their app regarding AI images:

“We have recently had a number of contributors ask about the emergence of Artificial Intelligence artwork, as well as some submissions to the library. I wanted to clarify our position on this, to avoid any confusion moving forward.

We won’t be accepting any query-based, computer-generated artwork into the library for a number of reasons, which I’ll outline below.

Arcangel Images is about quality artwork, whether it’s photography or digital illustrations, ultimately it’s about creative talent and the work that goes on behind the lens or pen.

On platforms such as Midjourney, it takes only a minute to generate an interesting image by typing in some keywords. Whilst this technology is fascinating and can create some pretty nice images the reality is that it has little to do with the artist.

We have a duty of care to all our contributors who work very hard on their illustrations and photography, so we won’t allow anything into the library that devalues their work.

There are a number of other areas to note:

-> Because it’s so easy to create an AI Image, you may well see them becoming popular on very low-value stock sites. We are the opposite so don’t compete at that level.

-> Many AI images created, even as limited parts of other images are quite easily recognisable. There are certain tells/signs and even within submissions at this stage, they were easily spotted.

-> It’s a good tool to generate ideas for yourself personally but it isn’t a piece of art that you can submit to us.

-> There are also questions around licensing. Whilst some of the earlier AI platforms allowed free usage, the newer sites are requiring paid subscriptions for certain end products, many of which will only cover an artist directly to a client i.e. a designer creating a cover image for a client for example and will not allow an image to be used to upload to a library, essentially sub licencing, which could end up in a legal issue for the contributor.

Credibility and personal branding can be severely damaged if a client identifies the use, (as we did, very quickly) of anything generated by a computer and not an artist.

Thank you for listening and hopefully this clears up any questions around Midjourney and any other query-based AI platforms.”

Nash – Sales Director

For a more detailed legal / licensing perspective, check out Fabio Nodari’s blog post.

DALL-E 2 isn’t the only player in AI: introducing Midjourney

Midjourney is similar to DALL-E 2, although I must admit that at a quick glance the results look/feel much more artistic. I’m also keen to try out their beta version with a view to putting together another detailed review.

Here are three examples as posted on their Facebook group.

The Economist Cover

Midjourney has been gaining attention, having recently been featured on the cover of The Economist, with the accompanying article “How a Computer Designed this Week’s Cover”.

Will DALL-E 2 make us all redundant?

There’s an interesting discussion over at the Microstockgroup Forum on the above question with, as usual, mixed opinions.

My take is that we, as stock contributors, operate in a rapidly changing environment and we must adapt accordingly. On the one hand, the price of images is rapidly deteriorating, but on the other hand, thanks to technology, we’re able to produce more and better-quality images than ever. That is, if we manage to keep up by always improving our craft. We must embrace change and look for opportunities while avoiding nostalgic thoughts of the “good old days”.

So, it won’t make us ALL redundant, but it will indeed make redundant SOME of us who fail to adapt.

AI Developments in Music

In addition, it’s easy to see these AI developments in photography in isolation when in fact they are occurring in all sectors, including music and soon within the all-encompassing Metaverse. Take, for example, this neat software that creates video clips based on lyrics, although, as the creator has explained, it still requires considerable human input.

AI will apply to loads of other sectors, including transportation and marketing… all very fascinating and all well beyond the scope of this post, which I hope you’ve enjoyed. Please comment below!

About Alex

I’m an eccentric guy, currently based in Lisbon, Portugal, on a quest to visit all corners of the world and capture stock images & footage. I’ve devoted eight years to making it as a travel photographer / videographer and freelance writer. I hope to inspire others by showing a unique insight into a fascinating business model.

Most recently I’ve gone all in on submitting book cover images to Arcangel Images. Oh, and I also recently purchased a DJI Mavic 2s drone and am taking full advantage of it.

I’m proud to have written a book about my adventures which includes tips on making it as a stock travel photographer – Brutally Honest Guide to Microstock Photography
